Resources For Professionals

This curated list is aimed at industry professionals who want to build interventions into their design and development processes that prompt consideration of the ethical issues and potential consequences of a given design. Many of these resources offer online or printable toolkits and frameworks that professionals can use at various stages of development to stimulate reflection and steer thinking toward more value-centric design decisions.

If you’re unsure about the type of resource you’re looking for, we suggest using our searchable database to look through our entire resource library.

Created by: Jason Millar

- Access resource here

- Tags: FRAMEWORKS, DESIGN, AI, ETHICS

This is an academic article that raises novel questions stemming from the automation of decision-making via robotics and AI. The article also includes a design tool meant to “aid ethicists, engineers, designers, and policymakers in the task of ethically evaluating a robot to identify design features that impact user autonomy, specifically by subjecting users to problematic forms of paternalism in the use context.”

Millar focuses on the following elements for consideration:

1. Description of the robot

2. The user experience under consideration

3. The implicated design feature

4. The designer’s interests

5. Other stakeholder interests

6. Potential human-human relationship models

7. General design responses

8. Technical limitations

9. Identified acceptable design features

Created by: Center for Data Science and Public Policy (University of Chicago)

From Aequitas

- Access resource here

- Tags: KITS, ACTIVITIES, GUIDED REFLECTIONS, EDI, AI, ETHICS, PROFESSIONAL CONSULTATIONS

From the creators: “The Bias Report is powered by Aequitas, an open-source bias audit toolkit for machine learning developers, analysts, and policymakers to audit machine learning models for discrimination and bias, and make informed and equitable decisions around developing and deploying predictive risk-assessment tools.”

The webpage allows you to upload and audit your own data, following a four-step process that involves:

1. Upload data

2. Select protected groups

3. Select fairness metrics

4. View bias report
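To give a sense of what steps 2 to 4 produce, here is a minimal sketch in plain Python of one common group fairness check: each protected group's false positive rate and its ratio (disparity) to a chosen reference group. This is not the Aequitas API. The toy records, the hypothetical `audit_fpr_disparity` helper, and the assumed tolerance band of 0.8 to 1.25 are illustrative choices only.

```python
from collections import defaultdict

def false_positive_rates(records):
    """Per-group false positive rate: FP / (FP + TN), counted over actual negatives."""
    false_pos = defaultdict(int)
    negatives = defaultdict(int)
    for group, label, prediction in records:
        if label == 0:  # only actual negatives contribute to the FPR
            negatives[group] += 1
            if prediction == 1:
                false_pos[group] += 1
    return {g: false_pos[g] / negatives[g] for g in negatives}

def audit_fpr_disparity(records, reference_group, low=0.8, high=1.25):
    """Hypothetical helper: ratio of each group's FPR to the reference group's FPR,
    marked fair when it falls inside an assumed tolerance band [low, high]."""
    rates = false_positive_rates(records)
    ref = rates[reference_group]
    if ref == 0:
        raise ValueError("reference group has a zero false positive rate; ratios are undefined")
    report = {}
    for group, rate in rates.items():
        disparity = rate / ref
        report[group] = {"fpr": rate, "disparity": disparity, "fair": low <= disparity <= high}
    return report

# Made-up toy data: (protected group, true label, predicted label)
records = [
    ("A", 0, 0), ("A", 0, 1), ("A", 0, 0), ("A", 0, 0), ("A", 1, 1),
    ("B", 0, 1), ("B", 0, 1), ("B", 0, 0), ("B", 0, 0), ("B", 1, 1),
]

for group, row in audit_fpr_disparity(records, reference_group="A").items():
    print(group, row)
```

Here group B's false positive rate is twice group A's, so B falls outside the assumed band. The web tool itself asks you to choose the protected groups and fairness metrics in steps 2 and 3, and its report covers more than this single metric.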

Written by: Jonathan Stray et al., 2022

- Access resource here

- Tags: VISUAL TOOLS, GUIDED REFLECTIONS, AI, ETHICS

This article discusses recommender systems (“the algorithms which select, filter, and personalize content across many of the world’s largest platforms and apps”) in the context of how they enact different values in their implementation.

Appendix A of the article contains a Table of Values that examines individual values (e.g. Usefulness, Liberty) from the perspectives of different cultures: “For each value, we provide possible interpretations in the context of recommender systems, some indicators that might be used to assess whether a system enacts or supports that value, and example designs that are relevant to that value.”

Created by: Salesforce

- Access resource here

- Tags: WORKSHOPS, GUIDED REFLECTIONS, DESIGN, EDI, ETHICS, PROFESSIONAL CONSULTATIONS

“Built with Intention is a toolkit that enables and empowers teams to consider all possible outcomes and impacts of the products and features they’re designing and developing–keeping ethics, inclusion, and accessibility at the forefront.”

It incorporates consequence scanning, visual mapping of intended and unintended consequences, and action plans. Designed to be run as a workshop, the toolkit works best early in the development process.

Created by: International Indigenous Sovereignty Interest Group (within the Research Data Alliance)

- Access here

- Tags: FRAMEWORKS, INDIGENOUS KNOWLEDGE, ETHICS, DATA

Based on the principles of Collective benefit, Authority to control, Responsibility, and Ethics (CARE), this resource explores the power imbalances inherent in data sharing, especially where data sharing conflicts with the autonomy of Indigenous Peoples.

In their own words: “The current movement toward open data and open science does not fully engage with Indigenous Peoples’ rights and interests. Existing principles within the open data movement (e.g. FAIR: findable, accessible, interoperable, reusable) primarily focus on characteristics of data that will facilitate increased data sharing among entities while ignoring power differentials and historical contexts. The emphasis on greater data sharing alone creates a tension for Indigenous Peoples who are also asserting greater control over the application and use of Indigenous data and Indigenous Knowledge for collective benefit… The CARE Principles for Indigenous Data Governance are people and purpose-oriented, reflecting the crucial role of data in advancing Indigenous innovation and self-determination.”

Created by: Dr. Max Marwede (design lead, research fellow), Tapani Jokinen (strategic design consultant), Ronja Scholz (circular design researcher)

- Access resource here

- Tags: WORKSHOPS, DIGITAL MURAL, VISUAL TOOLS, DISCUSSION BOARDS, SUSTAINABILITY, DESIGN, KITS

The Circular Design Toolkit is a suite of tools and workshops aimed at introducing concepts of circular design and ecodesign into business workflows. Self-described as a ‘radical, restorative & regenerative approach to business’ on the website’s splash page, the resource offers approximately 10 PDF-based tools and 3 complete workshops. These range from pitching the precepts of circular design, to advocating for a life-cycle model of technology, to envisioning a ‘big picture’ approach to doing business in a world threatened by scarcity and inequality.

To define the terms, the authors note that “Circular design relates directly to the concept of circular economies where economical growth is decoupled from the use of resources and resources are kept in a constant flow at their highest potential. Ecodesign therefore can be seen as one approach within circular design where the latter is a broader concept, applying design methods on entire product-service-systems and strategic business decisions for sustainable and circular business models” (“About Ecodesign”).

The core aim of the Ecodesign Circle is thus “to strengthen awareness and practical application of the ‘design approach’ to circular economy.”

From Microsoft Azure’s Responsible Innovation: A Best Practices Toolkit

- Access resource here

- Tags: ACTIVITIES, DESIGN

“Community jury, an adaptation of the citizen jury, is a technique where diverse stakeholders impacted by a technology are provided an opportunity to learn about a project, deliberate together, and give feedback on use cases and product design. This technique allows project teams to understand the perceptions and concerns of impacted stakeholders for effective collaboration.”

Sessions typically last 2-3 hours. They open with an introduction that presents “the project team and explains the product’s purpose, along with potential use cases, benefits, and harms”; continue with a discussion of key themes, where “jury members ask in-depth questions about aspects of the project, fielded by the moderator”; and close with either “deliberation and co-creation” or an individual anonymous survey. “Following the session: The moderator produces a study report that describes key insights, concerns, and potential solutions to the concerns.”

Created by: Forum for the Future

- Access resource here

- Tags: WORKSHOPS, GUIDED REFLECTIONS, SUSTAINABILITY

This resource includes a complementary report and guide to help industry professionals begin transitioning their businesses toward models of sustainability. The creators argue that this transition is necessary for the sake of “social and environmental systems,” “planetary health,” and “human rights”; to develop fairer ways of creating and distributing social value; and to build resilience across generations and geographies.

The report is organized into three sections, the last of which is aimed at providing practical tools and steps for businesses to reorient and redesign themselves to meet sustainability and equity targets, broadly defined.

The creators describe their aim as “to stretch business ambition to the next frontier of sustainability while simultaneously identifying some practical implications of how to start applying this to current activities and business functions. A compelling and clear synthesis is provided of current leading thinking around just and regenerative practice. It seeks to:

1. Create a robust definition of what being just and regenerative means for businesses and demonstrate the importance of this mindset shift for unlocking transformative action.

2. Introduce the Business Transformation Compass as a navigation guide for understanding and shifting the approach your business takes to change.

3. Draw out implications of this approach by describing the ‘critical shift’ needed on a range of issues and business functions.”

Created by: Observatory of Public Sector Innovation (OPSI)

- Access resource here

- Tags: ARCHIVES, KITS

OPSI describe their Toolkit Navigator as “a pathway to hundreds of freely available innovation toolkits, developed by authors in the public, private, academic and not-for-profit sectors. Whether you want to learn something, create something, or connect with others, the resource will guide you to toolkits, people and information needed to get you started.

It provides information about common methodologies used for public sector innovation, links to relevant government case studies applying these methodologies and access to a network of public sector innovators. The Toolkit Library contains resources suggested by the innovation community, community reviews and, where the publisher agrees, the editable source files for you to download and adapt to your own context.”

Created by: Doteveryone

- Access resource here

- Tags: GUIDED REFLECTIONS, DEVELOPMENT, ETHICS, DESIGN, SPECULATIVE DESIGN

Consequence Scanning is described as “A new Agile practice that fits within an iterative development cycle. This is a way for organisations to consider the potential consequences of their product or service on people, communities and the planet. This practice is an innovation tool that also provides an opportunity to mitigate or address potential harms or disasters before they happen.”

It is based on three main questions: “What are the intended and unintended consequences of this product or feature? What are the positive consequences we want to focus on? What are the consequences we want to mitigate?”

The toolkit includes a manual, printable headings, and prompts that raise consideration of potential and unintended consequences, making it ideal for groupwork and events.

Written by: Timnit Gebru, Jamie Morgenstern, Briana Vecchione, Jennifer Wortman Vaughan, Hanna Wallach, Hal Daumé III, Kate Crawford

- Access here

- Tags: GUIDED REFLECTIONS, DATA, ETHICS

This academic paper advocates the use of datasheets for datasets as a way to increase transparency in machine learning research. The idea is borrowed from the electronics industry, where every component is accompanied by a datasheet describing its operating characteristics, test results, recommended usage, and other information.

“We propose that every dataset be accompanied with a datasheet that documents its motivation, composition, collection process, recommended uses, and so on. Datasheets for datasets have the potential to increase transparency and accountability within the machine learning community, mitigate unwanted societal biases in machine learning models, facilitate greater reproducibility of machine learning results, and help researchers and practitioners to select more appropriate datasets for their chosen tasks.”

The paper addresses questions related to: Motivation, Composition, Collection Process, Preprocessing/cleaning/labelling, Uses, Distribution, and Maintenance.
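One lightweight way to put this into practice is to keep the datasheet as structured metadata that travels with the dataset. The sketch below is an assumption, not a format defined in the paper: a Python dataclass with one free-text field per question area listed above, plus a hypothetical `missing_sections` check that flags areas left blank. The example values are made up.

```python
from dataclasses import dataclass, fields

@dataclass
class Datasheet:
    """Hypothetical container: one free-text field per question area in the paper."""
    motivation: str = ""
    composition: str = ""
    collection_process: str = ""
    preprocessing_cleaning_labelling: str = ""
    uses: str = ""
    distribution: str = ""
    maintenance: str = ""

    def missing_sections(self):
        """Return the names of sections that are empty or whitespace-only."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

# Made-up example values for illustration
sheet = Datasheet(
    motivation="Created to benchmark sentiment models on product reviews.",
    composition="10,000 English-language reviews; no personal identifiers retained.",
)
print(sheet.missing_sections())
```

A check like this could run before a dataset is published, so that gaps in the documentation are visible rather than silently skipped.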

Created by: Google

- Access resource here

- Tags: WEB MODULES, GUIDED REFLECTIONS, CASE STUDIES, KITS

This resource comprises tools and articles offering term definitions, step-by-step guides, macro-analyses, and design principles that put well-being at the center of the design process. Capstone activities on the “Workshop activities” page are described as taking approximately 2 hours to complete and offer practice spaces to experiment with the principles outlined in earlier modules.

In the developers’ own words, “our toolkit provides an overview of what digital wellbeing means at Google, and offers a set of principles, thought starters, and workshop activities to help you put wellbeing into the center of the products that you build” (“For Developers”).

With the resource you can learn “the definition of digital wellbeing and how it shows up in our 4 UX principles. Then, get to know each principle through research insights, guidelines, and case studies” (“For Developers”).

Created by: Design Kit

- Access resource here

- Tags: CASE STUDIES, DESIGN

Design Kit’s Case Studies page offers a series of stories about how its approach to human-centered design has produced beneficial outcomes for the different communities it has worked with.

Each story breaks down the phases of the respective design process, from inspiration through ideation to implementation. These accounts of responsible innovation can give readers a strong understanding of the methods and approaches needed to take on similar work.

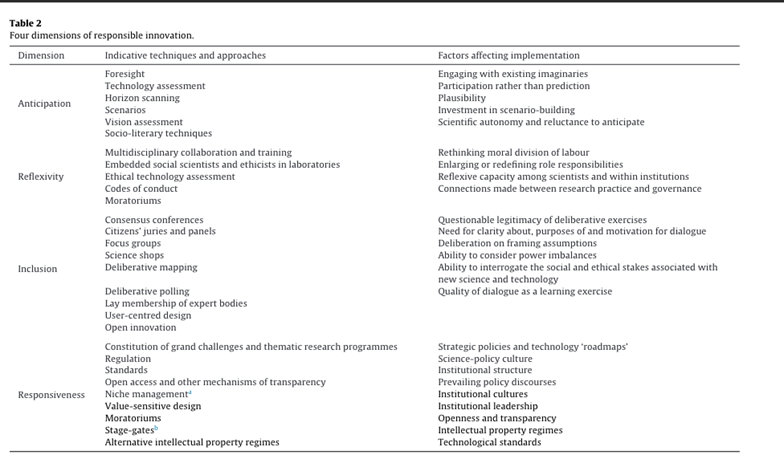

Written by: Jack Stilgoe, Richard Owen, and Phil Macnaghten (appears in Research Policy, 2013)

- Access here

- Tags: FRAMEWORKS

In this paper, the authors introduce the four dimensions of responsible innovation (RI): Anticipation, Reflexivity, Inclusion, and Responsiveness. In addition, they question how to integrate this model into research, production, and pedagogical contexts.

The article includes several tables for identifying and thinking through product design problems to supplement its argument, such as the one below:

Created by: Interaction Design Foundation

- Access resource here

- Tags: VISUAL TOOLS, GUIDED REFLECTIONS

This webpage provides instructions on how to create an empathy map exercise for your team. The creators explain: “An Empathy Map allows us to sum up our learning from engagements with people in the field of design research. The map provides four major areas in which to focus our attention on, thus providing an overview of a person’s experience. Empathy maps are also great as a background for the construction of the personas that you would often want to create later.”

“An Empathy Map consists of four quadrants. The four quadrants reflect four key traits, which the user demonstrated/possessed during the observation/research stage. The four quadrants refer to what the user: Said, Did, Thought, and Felt. It’s fairly easy to determine what the user said and did. However, determining what they thought and felt should be based on careful observations and analysis as to how they behaved and responded to certain activities, suggestions, conversations, etc.”

Created by: Batya Friedman

- Access resource here

- Tags: GAMES, GUIDED REFLECTIONS, DESIGN

The Envisioning Cards are an interactive tool. As the creators explain, “Envisioning Cards are built upon a set of four envisioning criteria: stakeholders, time, values, and pervasiveness. Each card contains on one side a title and an evocative image related to the card theme; on the flip side, the card shows the envisioning criterion, elaborates on the theme, and provides a focused design activity.”

Created by: Artefact Group

- Access resource here

- Tags: KITS, ACTIVITIES, GUIDED REFLECTIONS, GAMES, ETHICS

Artefact Group describes the Ethical Explorer Pack as “A physical and digital toolkit to pioneer a new standard for building tech that’s safer, healthier, fairer, and more inclusive for all. Its inviting narrative and lightweight physicality keeps it on-hand and front-of-mind during important discussions.”

The pack includes a set of cards, the Risk Zone Cards, which “provoke thoughtful conversation around responsibility and impact, no matter where you are in a product’s lifecycle. Each card has three types of questions to help teams figure out where they stand, anticipate risk, or lead the way when it comes to radical change.”

In addition, the toolkit offers a Field Guide that “suggests five different activities to help both individuals and groups start their journey toward more ethical technology and gain buy-in within their organizations.”

From RRI Tools, a database of tools and articles on RRI for policy makers, the research community, education community, business and industry, and civil society organizations

- Access resource here

- Tags: GUIDED REFLECTIONS, AI, ETHICS

“This toolkit is designed to help governments (and others) use algorithms responsibly.”

It describes high-level concepts and terms, then offers worksheet questions that help users characterize algorithms, assess their level of risk, and mitigate that risk through reflection and specific methods.

Developed by: Shannon Vallor (Regis and Dianne McKenna Professor) at the Markkula Center for Applied Ethics (Santa Clara University)

- Access resource here

- Tags: KITS, ACTIVITIES, GUIDED REFLECTIONS, ETHICS

This toolkit contains seven different tools that “represent concrete ways of implementing ethical reflection, deliberation, and judgment into tech industry engineering and design workflows. Used correctly, they will help to develop ethical engineering/design practices.”

The seven tools, each with its own activities, are:

- Tool 1: Ethical Risk Sweeping;

- Tool 2: Ethical Pre-mortems and Post-mortems;

- Tool 3: Expanding the Ethical Circle;

- Tool 4: Case-based Analysis;

- Tool 5: Remembering the Ethical Benefits of Creative Work;

- Tool 6: Think About the Terrible People;

- Tool 7: Closing the Loop: Ethical Feedback and Iteration

Created by: Omidyar Network

- Access resource here

- Tags: KITS, GUIDED REFLECTIONS, CASE STUDIES, DESIGN, PROFESSIONAL CONSULTATIONS

The Ethical OS Toolkit includes: “A checklist of 8 risk zones to help you identify the emerging areas of risk and social harm most critical for your team to start considering now; 14 scenarios to spark conversation and stretch your imagination about the long-term impacts of tech you’re building today and 7 future-proofing strategies to help you take ethical action today.”

Created by: Jelena Vulic, from the Critical Media Lab (University of Waterloo)

- Access resource here

- Tags: GAMES, DESIGN, EDI, SPECULATIVE DESIGN

Creator Jelena Vulic describes Exclusimon as “a small deck of cards I created to help designers consider the able-bodied biases they may have unconsciously integrated into their products, thus making them exclusionary of the disabled community. Similarly to [the Artefact Group’s] Tarot of Tech, it is meant to be used in a meeting setting, where designers should read – or in some cases, struggle to read – the cards carefully and ask themselves if their products share characteristics with any of the cards, and what they should do to change that.”

Created by: Design Kit

- Access resource here

- Tags: ACTIVITIES, DESIGN, PROFESSIONAL CONSULTATIONS

“Extremes and Mainstreams” is a thought experiment for design groupwork. It is a 30-60 min group activity in which participants identify “extreme” users of their product (i.e. those outside the mainstream or target user group) and then interview members of these groups to gain insight into how they use the product.

Created by: Microsoft

- Access resource here

- Tags: ACTIVITIES, GUIDED REFLECTIONS, DEVELOPMENT, AI, ETHICS, EDI

This Microsoft tool, available on GitHub, is a Python package that helps users “assess and improve fairness of machine learning models.”

In their words, it “empowers developers of artificial intelligence (AI) systems to assess their system’s fairness and mitigate any observed unfairness issues. Fairlearn contains mitigation algorithms as well as a Jupyter widget for model assessment. Besides the source code, this repository also contains Jupyter notebooks with examples of Fairlearn usage.”
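As a rough illustration of the kind of assessment Fairlearn automates, the sketch below computes demographic parity difference by hand: the gap between the highest and lowest selection rates (the share of positive predictions) across groups. It is plain Python, not Fairlearn's API. Fairlearn ships a metric of the same name, `fairlearn.metrics.demographic_parity_difference`, which is the better choice in real projects. The predictions and group labels below are made up.

```python
from collections import defaultdict

def selection_rates(y_pred, groups):
    """Share of positive (1) predictions within each group."""
    positives = defaultdict(int)
    totals = defaultdict(int)
    for pred, group in zip(y_pred, groups):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}

def demographic_parity_difference(y_pred, groups):
    """Largest minus smallest group selection rate; 0 means equal selection rates."""
    rates = selection_rates(y_pred, groups)
    return max(rates.values()) - min(rates.values())

# Made-up binary predictions for two groups, "x" and "y"
y_pred = [1, 0, 1, 1, 0, 0, 1, 0]
groups = ["x", "x", "x", "x", "y", "y", "y", "y"]
print(selection_rates(y_pred, groups))
print(demographic_parity_difference(y_pred, groups))
```

Group "x" is selected three times as often as group "y" here, giving a difference of 0.5. Demographic parity is only one fairness criterion, and which criterion fits depends on the application.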

Created by: Open Roboethics Institute

- Access resource here

- Tags: GUIDED REFLECTIONS, AI, ETHICS, ACTIVITIES

This PDF toolkit, from the Open Roboethics Institute, provides a series of thought experiments in sequential order that help to ensure AI is produced ethically. The 25-page PDF includes a range of activities that walk users through how to: identify stakeholders and use case, discover risk ethics, listen to stakeholders, map personas and identify values, discover value tensions, discover tensions with stakeholder persona, discover tension in technology, and more.

Created by: FOSTER

- Access here

- Tags: WEB MODULES, ARCHIVES

With a free account, FOSTER offers access to Open Science courses which provide a range of content on responsible research and innovation (RRI) in science, data management, text and data mining, and other areas related to tech and data.

In their own words: “The FOSTER portal is an e-learning platform that brings together the best training resources addressed to those who need to know more about Open Science, or need to develop strategies and skills for implementing Open Science practices in their daily workflows. Here you will find a growing collection of training materials. Many different users – from early-career researchers, to data managers, librarians, research administrators, and graduate schools – can benefit from the portal. In order to meet their needs, the existing materials will be extended from basic to more advanced-level resources. In addition, discipline-specific resources will be created.” (About)

From Microsoft Azure’s Responsible Innovation: A Best Practices Toolkit

- Access resource here

- Tags: VISUAL TOOLS, GUIDED REFLECTIONS, DESIGN, ETHICS

This resource from Microsoft Azure helps users to “anticipate the potential for harm, identify gaps in product that could put people at risk, and ultimately create approaches that proactively address harm.” It includes written resources that describe the foundations of harm assessment as well as several downloadable worksheets. There is a strong emphasis on upholding principles of Value Sensitive Design.

Created by: Innovate UK

- Access here

- Tags: GUIDED REFLECTIONS, SUSTAINABILITY

The Horizons Toolkit consists of a set of cards that can be used on your own or with a group to drive innovative thinking based on both commercial market trends and long-term sustainability concerns. The cards provide definitions and key statistics for various important topics, prompting consideration of factors such as ozone, fresh water, land use, trust, long-termism, evidence, mobility, health, shelter, and energy.

Innovate UK promote the kit as “a great tool to help you understand the big issues and trends coming your way, and stimulate thinking and discussion about how you should respond to them.”

Created by: Elrha

- Access resource here

- Tags: ACTIVITIES, GUIDED REFLECTIONS, ETHICS

This interactive PDF toolkit contains several activities that can be printed and used in classroom or professional settings to stimulate reflection. The questions posed by the kit include: How will you support ethical humanitarian innovation across your work? What key values will you prioritise and operationalise?

As the creators explain, “The Toolkit is composed of five tools designed to work independently. However for best results, they can also be used in combination with each other. Each one targets an ethical vulnerability identified during the research lead by the Humanitarian Health Ethics Research Group.”

Created by: John F. Wood Centre for Business and Student Enterprise, University of Guelph

- Access resource here (requires self-enrollment)

- Tags: POST-SECONDARY PROGRAM, WEB MODULES, FRAMEWORKS

The Innovation Toolkit is accessed through self-enrollment. If you are not a University of Guelph student, faculty member, or staff member, you will need to request access.

The university’s webpage describes the resource as “a process for problem-solving. It uses tools commonly found in entrepreneurship and innovation to help you tackle wicked problems on the issues that matter.

For over ten years, The John F. Wood Centre at the University of Guelph has led in community engagement, entrepreneurship, and innovation. We have adapted these tools from our work and research in those fields and codified them into a simple toolkit for use by students, entrepreneurs, business leaders, and community members. These tools are designed to support many groups. Students in particular will find further applications in first year seminars, fourth year capstone classes, skill-development graduate classes, industry-driven hackathons, pitch competitions, and everything in between.”

From Microsoft Azure’s Responsible Innovation: A Best Practices Toolkit

- Access resource here

- Tags: GAMES, AI, EDI, ETHICS, GUIDED REFLECTIONS

This resource from Microsoft Azure is a “card game and team-based activity that puts Microsoft’s AI principles of fairness, privacy and security, reliability and safety, transparency, inclusion, and accountability into action.” As explained in the accompanying YouTube video, the game asks participants to adopt different user perspectives and then write reviews of products, taking into account the particular experiences and values of their given perspective.

Judgment Call thus “provides an easy-to-use method for cultivating stakeholder empathy by imagining their scenarios.”

Created by: Observatory for Responsible Research and Innovation in ICT (ORBIT)

- Access resource here

- Tags: GUIDED REFLECTIONS, PROFESSIONAL CONSULTATIONS

Created by: Vincit

- Access resource here

- Tags: WEB MODULES, PROFESSIONAL CONSULTATIONS, GUIDED REFLECTIONS, KITS, VISUAL TOOLS, SUSTAINABILITY

This resource offers a set of toolkits and reflection and consultation activities primarily designed for established professionals and businesses. The creators are focused on promoting “planet centric design,” which they define as design that causes no harm to the planet.

The resource places particular emphasis on app and software design, leading professionals through several reflective exercises that assess their work across the whole process, from design to delivery. Most questions are fairly open-ended, though the toolkit’s capstone activity asks users to design actionable plans for breaking away from unsustainable practices and design workflows.

In the words of the developers, the resource is “designed to help you create products and services that do not harm the planet. It will help you create concepts that are desirable and profitable, but also put the planet in the centre of the design process. We recognise that this is a challenging task as planetary systems are complex and intertwined in ways that humanity does not fully understand. So, this toolkit helps you live up to the challenge, by offering activities to navigate complexity, collaborate and create better solutions for society that fit within the Earth’s boundaries.”

Created by: Polarity Partnerships

- Access resource here

- Tags: VISUAL TOOLS, GUIDED REFLECTIONS

The Polarity Map is intended to help groups and organizations identify and assess the value polarities at play in their innovative decision making. The tool then pushes users to solve these tensions with a “Both-and” rather than “Either-or” approach, thereby synthesizing critical reflection with practical action.

Some of the polarities you may have to engage with include Margin vs. Mission, Standardized vs. Configurable, Continuity vs. Transformation, and Candor vs. Diplomacy.

Created by: Alexi Orchard, from the Critical Media Lab (University of Waterloo)

- Access resource here

- Tags: GAMES

Created by: Aleksander Franiczek, from the Critical Media Lab (University of Waterloo)

- Access resource here

- Tags: GAMES, SPECULATIVE DESIGN

From the creator: “Prometheus is a ‘Discourse Reflection Game’ designed to encourage engineers to reflect on the potential social and ethical consequences of tech designs. It’s a narratively-driven, text-based game with gameplay that requires players to make discursively engaged dialogue choices with three different characters from a diverse set of backgrounds… the game is intended to function as a reflective tool that demands an engagement with design that is less concerned about commercial value and optimization and more focused on recognizing how tech designs shape human experience.”

Created by: Stefan Kuhlmann, Jakob Edler, Gonzalo Ordóñez-Matamoros, Sally Randles, Bart Walhout, Clair Gough, Ralf Lindner

From PRISMA’s RRI Toolkit

- Access here

- Tags: FRAMEWORKS, CASE STUDIES, GUIDED REFLECTIONS

The ResAGorA Responsibility Navigator is a downloadable handbook that “supports decision-makers to govern [their] activities towards more conscious responsibility. What is considered ‘responsible’ will always be defined differently by different actor groups in research, innovation, and society – the Responsibility Navigator is designed to facilitate related debate, negotiation and learning in a constructive and productive way.”

The Responsibility Navigator supports the adoption of research measures in a way that promotes responsible innovation as an institutionalised ambition. It identifies ten governance principles and requirements (with case studies) and gives a set of questions related to each.

Through these means, “the framework can be used by actors facing dilemmas and complex situations impeding the governance of responsible research and innovation, and by actors wanting to reflect strategically on their own position as well as that of others in navigating R&I towards higher levels of responsible action.”

Created by: PwC

- Access resource here

- Tags: GUIDED REFLECTIONS, AI

This Responsible AI Diagnostic Survey (which takes approximately 10 minutes) asks questions about organizations’ “AI implementation and readiness.” Users must enter their organization’s information to access the survey, which addresses questions such as: “How responsible is the development, deployment and ongoing management of your AI solutions? Are you suitably prepared to produce robust, safe and beneficial enterprise AI solutions that are trusted by consumers, businesses and regulators to contribute positively to economic development and social good?”

Created by: Caroline Sinders, Margarita Noreiga, Edward Anthony, Xuedi Chen, Pedro Olivieria, Jay Mollica

- Access resource here

- Tags: WORKSHOPS, GUIDED REFLECTIONS, COMMUNITY SPACES

This PDF resource offers “a collection of insights and stories about how communities and activists are using tools to connect with each other, and how designers and engineers can support them.”

The document collates the contributions of 28 designers, educators, professionals, and activists regarding advice on how to design responsibly in our present moment, what kinds of tools to use, and how such tools can be improved.

Created by: Rafael Calvo (Imperial College London) and Dorian Peters (University of Cambridge)

- Access here

- Tags: ARCHIVES, WEB MODULES, KITS

The Responsible Tech Design Library offers a collection of modules aimed at “wellbeing-supportive design” with “methods of ethical analysis [as] a powerful way forward toward achieving more responsible and humane technology” (“About”).

The web page primarily links users to a large collection of resources (some of which are included as separate entries on our site).

Created by: ThoughtWorks

- Access resource here

- Tags: KITS, ACTIVITIES, GUIDED REFLECTIONS, ETHICS

The Responsible Tech Playbook features a range of tools, techniques, and thought exercises that can be applied “to help you and your team be more inclusive, aware of bias, transparent and to mitigate negative inadvertent consequences.” The resource can thus help spark conversation and initiate changes in perspective.

The Playbook’s tools range across several topics, including: Agile Threat Modeling, Consequence Scanning, Data Ethics Canvas, Failure Modes & Effects Analysis, Flourishing Business Model Canvas, InterpretML, a Materiality Matrix Assessment, Responsible Strategy, and Unintended Consequences.

The webpage also offers a link to a recorded webinar from leaders and practitioners of responsible tech thinking, “including a showcase of the best tools designed to help you think through the implications of the products you are building.”

Created by: RIEcoLab

- Access here (individual toolkits accessed through Google Drive)

- Tags: KITS, WEB MODULES, ACTIVITIES, FRAMEWORKS, GUIDED REFLECTIONS, ARCHIVES

With this resource, RIEcoLab offers a series of training modules with free certification, one-to-one mentorship and support schemes for student researchers and for academic and non-academic professionals, as well as specialized mentorship for startups and scaleups.

Each toolkit contains thought exercises, checklists, and frameworks that can be applied modularly. The toolkits focus on a range of topics, including:

- Toolkit 1: Entrepreneurial discovery;

- Toolkit 2: Setting up responsible & impactful knowledge and technology transfer office;

- Toolkit 3: Responsible research and innovation;

- Toolkit 4: Investment and financing;

- Toolkit 5: Inclusive KPIs for impactful universities;

- Toolkit 6: Entrepreneurship, Innovation & Stakeholders

Created by: Observatory for Responsible Research and Innovation in ICT (ORBIT)

- Access resource here

- Tags: GUIDED REFLECTIONS, PROFESSIONAL CONSULTATIONS, SUSTAINABILITY

This resource “combines the EPSRC vision of RRI as expressed in the AREA framework with the highly influential view of RRI as proposed by the European commission that emphasises six different keys (gender, science education, open access, ethics, public engagement and governance).” Users fill out a webform and answer a series of prompts about the Sustainable Development Goals (SDGs) relevant to their project and their technology’s Technology Readiness Level (TRL).

As ORBIT explains: “This tool uses the Sustainable Development Goals as a widely adopted measure of social ‘goods’ and therefore a useful metric against which to measure the potential impact of a technology and assess the level of required responsible innovation work. The ‘impact’ encompasses both positive and negative impact.”

From RRI Tools, a database of tools and articles on RRI for policy makers, the research community, education community, business and industry, and civil society organizations

- Access here

- Tags: GUIDED REFLECTIONS, ETHICS, EDI

The RRI Self-Reflection Tool stimulates reflection and inspiration for innovation research “by providing questions organised according to the RRI Policy Agendas: Ethics, Gender Equality, Governance, Open Access, Public Engagement and Science Education.”

It is available free online as an interactive tool or as a downloadable template.

Written by: Indigenous Corporate Training Inc

- Access here

- Tags: GUIDED REFLECTIONS, SUSTAINABILITY, INDIGENOUS KNOWLEDGE

This blog entry introduces the Seventh Generation Principle, drawing on Indigenous understandings that can help innovation researchers internalize that their work should not only consider present-day stakeholders but also help shape a better future and community for generations to come.

“The Seventh Generation Principle is based on an ancient Haudenosaunee (Iroquois) philosophy that the decisions we make today should result in a sustainable world seven generations into the future.”

Created by: Leyla Acaroglu et al.

- Access resource here

- Tags: WEB MODULES, WORKSHOPS, GAMES, ARCHIVES, SUSTAINABILITY, KITS

This webpage features a collection of freely accessible toolkits aimed at product design, reorienting product lifecycle outlooks, and encouraging personal responsibility for sustainability goals. Writing from an industry perspective, Acaroglu and her research team use terms such as “rebellious” and “disruptive” to describe their tech interventions. The team’s goal is to “support change-makers in adopting the skills needed to help transform the global economy into a circular and sustainable one by design.”

Many toolkits are included, but the two summarized below stand out in their adherence to Critical By Design’s ethos.

The Circular Economy ReDesign Workshop Kit: “This free toolkit has been designed after thousands of hours of workshops and trainings with groups around the world. Designed to support a flow from traditional linear thinking through to circular and sustainable solutions, the workshop kit includes 6 worksheets that step you through the process of 1) understanding a linear system through doing a product life cycle thinking exploration, 2) identifying areas of intervention that could be adopted with this current model, 3) exploring circular business strategies, 4) looking at sustainable redesign options and then ideating a series of proposition that 5) can be converted into a new circular flow and finally 6) do a quick product viability assessment to see how viable the new circular solution is.”

ChangeMakers Lab Card Game: “This universal card deck was developed as part of the ChangeMakers Lab, with 56 cards and 36 activities that can be downloaded and used by anyone to advance sustainability and creativity.”

Created by: Alex Crowfoot

- Access resource here

- Tags: DISCUSSION BOARDS, GUIDED REFLECTION, MODULES, DESIGN, SUSTAINABILITY

Sustainable Design Tools offers multiple interactive tools aimed particularly at organisations that are just starting to work towards a circular economy and transition design. Using these tools in a team environment can help work through tough questions such as: “What is our impact?” and “How might we reduce it?”

The tools are primarily informed by environmentalism and sustainability in the design of technology and products. The site contains six illustrated models for approaching business practices and research, accompanied by discussion and guided reflection activities. The resources are designed to encourage more communication between designers and stakeholders, more critical research and consultation before design begins, and an assessment of the lifecycle and social impacts of technology.

Created by: Artefact Group

- Access here

- Tags: GAMES, GUIDED REFLECTION, DESIGN, SPECULATIVE DESIGN

Inspired by the visual and conceptual format of tarot cards, “The Tarot Cards of Tech are a set of provocations designed to help creators more fully consider the impact of technology. They’ll not only help you foresee unintended consequences–they can also reveal opportunities for creating positive change.”

The cards, with titles such as “The Smash Hit,” “The Radio Star,” “The Siren,” and “The Superfan,” encourage consideration of unforeseen factors or excluded stakeholders in the development of a design.

The cards can be used online through the interactive webpage, or downloaded and printed for physical use in in-person workshops.

Created by: Mark Abbott et al.

- Access resource here (sign-up required)

- Tags: WEB MODULES, GUIDED REFLECTIONS, ETHICS, DISCUSSION BOARDS

The Tech Stewardship Practice Program (TSPP) is a free, term-long online course that offers thorough engagement with ideas relating to responsible innovation. The program combines resources (in the form of videos from tech stewards and information documents), peer-to-peer interactions on discussion boards, and reflection submissions. The course offers a certificate upon completion that can be included on your resume or CV.

Some of the program’s standout resources include:

- Practice Behaviour One Pagers – a series of self-reflection questions focused on the key themes of seeking purpose (“What opportunities do we see to both FOCUS AND BROADEN the positive outcomes we are working towards?”), taking responsibility (“What opportunities do we see to both take ACTION AND REFLECT critically as we work towards benefit for all?”), expanding inclusion (“What opportunities do we see to both DEEPEN AND WIDEN who and what is included in our efforts?”), and working to regenerate (“What opportunities do you see to both UTILIZE AND CULTIVATE the various systems with which you engage?”).

- Organizational Reflection Worksheet – an editable PDF worksheet that asks users to reflect on how often they or their companies engage in the positive side of the key themes noted above.

- Module Questions – a series of questions related to module content that are answered/discussed on the program’s Community Board.

Created by: Rathenau Institute

From PRISMA’s RRI Toolkit

- Access resource here

- Tags: GUIDED REFLECTIONS, CASE STUDIES, ETHICS

Aimed particularly at biotechnology researchers and specialists, PRISMA’s Techno-Moral Vignettes are designed to “help you gain insight in possible future moral aspects of your biotechnology research by elaborating future scenarios.”

Users can find “eighteen brief scenarios, describing possible futures made possible through synthetic biology. You can use these as starting points for moral deliberation, aided by a small number of simple questions that can guide your conversations: what issues are raised in each vignette, and what changes have been effected through the technology at issue.”

Created by: AI Ethics Lab

- Access resource here

- Tags: VISUAL TOOLS, ACTIVITIES, AI, ETHICS

Centered around the core principles of Autonomy, Harm-Benefit, and Justice, AI Ethics Lab recommends use of their Toolbox “to think through the ethical implications of the technologies that you are evaluating or creating. The Box is a simplified tool that lists important ethical principles and concerns, puts instrumental ethical principles in relation to core principles, helps visualize ethical strengths & weaknesses of technologies, and enables visual comparison of technologies.”

Users interested in the Toolbox are advised to read its manual first.

Created by: Committee for Technological Innovation & Ethics (Komet)

- Access resource here

- Tags: GUIDED REFLECTION, WEB MODULES, FRAMEWORKS, ETHICS, DEVELOPMENT

As put by the creators: “This is a tool for a guided self-assessment. You will be asked a series of questions that will help you take a more responsible approach to your work. At the end of the self-assessment, the tool will provide you with a list of concrete actions. You can use the tool repeatedly in the course of your tech development work.” The tool also prompts users to consider both immediate and long-term risks, encouraging responsible approaches that balance ethical and practical perspectives. A Q&A and glossary are also included.

Komet claims the resource is “accessible for all technologies” and encourages users to “think of tech in the broadest sense: AI, biological life sciences, autonomous vehicles, etc.”

Created by: Google’s PAIR (People and AI Research) Initiative

From RRI Tools, a database of tools and articles on RRI for policy makers, the research community, education community, business and industry, and civil society organizations

- Access resource here

- Tags: GUIDED REFLECTIONS, AI

Google’s What-If Tool is intended as a pragmatic resource for developers of machine learning systems. It offers a visual interface for probing the behavior of trained machine learning models and requires minimal coding to use.

In addition to working as a machine learning diagnostic tool, it lets users “try on five different types of fairness.” The tool thus helps draw attention to the nuances and complexity of fairness. In the practical context of artificial intelligence systems, it asks: What do users want to count as fair?
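To illustrate why different definitions of fairness can pull in different directions, the sketch below computes per-group gaps for three criteria of the kind the What-If Tool lets users compare: demographic parity (equal selection rates), equal opportunity (equal true-positive rates), and equal accuracy. It is a minimal, hypothetical example and not the What-If Tool’s code or API: the functions `group_rates` and `fairness_gaps` and the toy labels are invented for illustration, and the sketch only measures gaps rather than adjusting decision thresholds.

```python
import math
from collections import defaultdict


def group_rates(y_true, y_pred, groups):
    """Hypothetical helper: per-group selection rate, true-positive rate, accuracy.

    y_true and y_pred are lists of 0/1 labels; groups holds a group id per row.
    """
    counts = defaultdict(lambda: {"n": 0, "pred_pos": 0, "pos": 0, "tp": 0, "correct": 0})
    for t, p, g in zip(y_true, y_pred, groups):
        c = counts[g]
        c["n"] += 1
        c["pred_pos"] += p                      # predicted positive (selected)
        c["pos"] += t                           # actually positive
        c["tp"] += int(t == 1 and p == 1)       # true positive
        c["correct"] += int(t == p)             # correct prediction
    rates = {}
    for g, c in counts.items():
        rates[g] = {
            "selection_rate": c["pred_pos"] / c["n"],
            # TPR is undefined for a group with no actual positives.
            "tpr": c["tp"] / c["pos"] if c["pos"] else float("nan"),
            "accuracy": c["correct"] / c["n"],
        }
    return rates


def fairness_gaps(rates):
    """Hypothetical helper: max minus min across groups for each metric (NaNs skipped)."""
    gaps = {}
    for m in ["selection_rate", "tpr", "accuracy"]:
        vals = [r[m] for r in rates.values() if not math.isnan(r[m])]
        gaps[m] = max(vals) - min(vals) if vals else float("nan")
    return gaps


if __name__ == "__main__":
    # Made-up toy data: four rows for group A, four for group B.
    y_true = [1, 1, 0, 0, 1, 0, 0, 0]
    y_pred = [1, 1, 0, 0, 1, 1, 0, 0]
    groups = ["A"] * 4 + ["B"] * 4
    print(fairness_gaps(group_rates(y_true, y_pred, groups)))
    # prints {'selection_rate': 0.0, 'tpr': 0.0, 'accuracy': 0.25}
```

In this toy case the model satisfies demographic parity and equal opportunity exactly, yet its accuracy differs by 25 percentage points between the groups, which is the kind of tension the question “What do users want to count as fair?” points to.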